Voice AI Matures as Providers Prioritize Speed, Reliability, and System Design

Abhishek Sharma, Senior Technical Product Marketing Manager at Telnyx, explains why Voice AI’s real advantage now comes from the systems built around the models.

Key Points

As the core components of Voice AI become more affordable, the pressure grows for companies to find an advantage beyond the model layer.

Abhishek Sharma, Senior Technical Product Marketing Manager at Telnyx, explains why the systems that can deliver speed, reliability, and consistent performance are at a distinct advantage.

By solving for latency, building a true memory layer, and treating data as core infrastructure, Voice AI can become the preferred channel for customers.

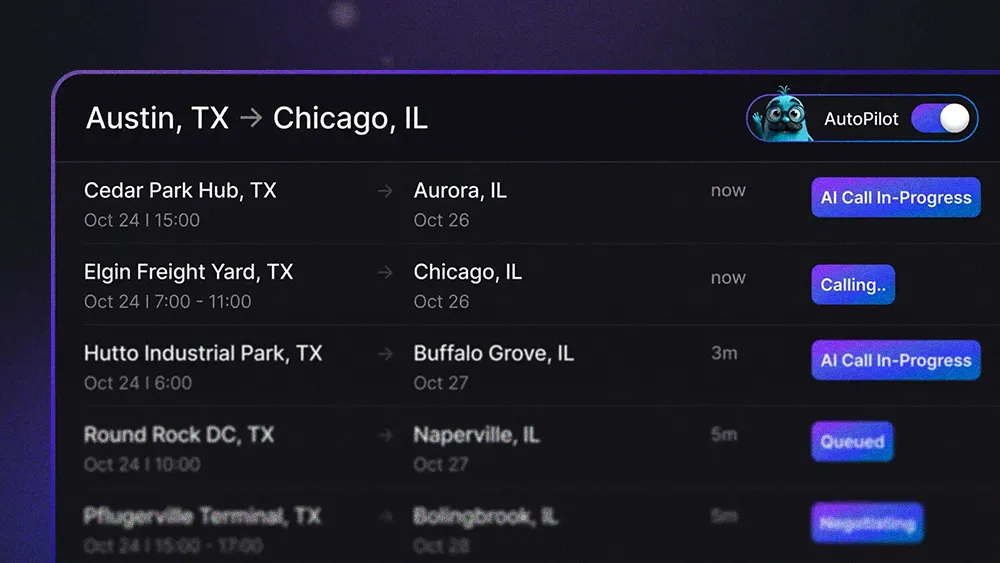

The Voice AI stack is racing toward commodity status as speech-to-text, text-to-speech, and LLMs enter a cycle of steady price drops. As the underlying models become more affordable and widely available, industry leaders are shifting their focus from the models themselves to the systems that support them. The companies pulling ahead are the ones investing in infrastructure that delivers real speed, real reliability, and real business impact.

According to Abhishek Sharma, Senior Technical Product Marketing Manager at conversational AI company Telnyx, this trend is already reshaping the competitive landscape. With more than six years of experience, Sharma has a track record of driving growth in revenue, user acquisition, and engagement for companies ranging from startups to major corporations like Microsoft. Today, he specializes in data-driven GTM strategies, product positioning, and targeted digital campaigns.

As AI becomes widely available, a more defensible strategy lies in how that technology is deployed, Sharma explains. "Sustainable differentiation will be in the infrastructure. As the core technology gets commoditized, what cannot be commoditized is how a company uses it," Sharma says.

Cheaper by the cycle: Costs across the Voice AI stack continue to fall, with each new release from major providers coming in cheaper and more efficient than the last. Open-source models are also becoming easier for teams to run at minimal expense. "Everything on the model side is getting cheaper each cycle," says Sharma. "The advantage will not come from the model. It will come from what a company builds around it."

Solving for latency is the first step in building an infrastructure advantage. Sharma says that requires addressing both technical bottlenecks and the more subtle challenges of user perception. On the engineering side, using private servers and dedicated points of presence can reduce network hops and shave a noticeable 100–120 milliseconds off response times. But the real trick is mastering "perceived latency," where designers might intentionally add a slight pause or sound cue—like the clicking of a keyboard—to make an AI interaction feel more natural.
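The perceived-latency idea can be illustrated with a small Python sketch. It is not Telnyx's implementation: `respond_with_filler`, `generate_reply`, `play_cue`, and the 0.3-second threshold are all hypothetical names and values chosen for illustration. The sketch waits for the reply and, only if the reply is slow, triggers a sound cue once while the caller is waiting.

```python
import asyncio

# Assumed threshold for illustration only; the article gives no figure for this.
FILLER_THRESHOLD_S = 0.3


async def respond_with_filler(generate_reply, play_cue, threshold_s=FILLER_THRESHOLD_S):
    """Return the reply from generate_reply(), triggering play_cue() once
    if the reply has not arrived within threshold_s seconds.

    generate_reply: hypothetical async callable that produces the agent's reply.
    play_cue: hypothetical non-blocking callable that starts a sound cue
              (for example, keyboard clicks).
    """
    task = asyncio.create_task(generate_reply())
    # Wait up to threshold_s for the reply; the task keeps running on timeout.
    done, _pending = await asyncio.wait({task}, timeout=threshold_s)
    if not done:
        play_cue()  # the reply is slow, so fill the silence
    return await task


async def _slow_reply():
    await asyncio.sleep(0.05)  # stands in for model and network time
    return "Your flight is rebooked."


async def _fast_reply():
    return "Done."


cues = []
slow_result = asyncio.run(
    respond_with_filler(_slow_reply, lambda: cues.append("keyboard"), threshold_s=0.01)
)
cues_after_slow = list(cues)
fast_result = asyncio.run(
    respond_with_filler(_fast_reply, lambda: cues.append("keyboard"), threshold_s=0.5)
)
```

In this sketch a fast reply never triggers the cue, so the filler is heard only when the caller would otherwise sit in silence.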

The adoption ladder: "I see Voice AI adoption happening in three layers. The first is simple replacement: using AI for customer support. The next layer is about creating new revenue streams, like using an AI agent for upselling. But the deepest, most transformative layer is when Voice AI becomes part of the company's core identity, creating an AI-first business model," Sharma explains.

Ditching the duct tape: Advancing through these layers relies on treating data as a core piece of infrastructure. "The memory layer is increasingly important. If you speak to a voice AI assistant today, tomorrow it remembers the context from your previous interaction. This memory layer allows enterprises to resolve issues faster and provide consistent experiences. Right now, the method involves storing data in cloud buckets, but it isn’t fast enough; it’s more like patching things together with tape," Sharma says.
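As a rough illustration of what a memory layer does at its simplest, the Python sketch below keeps a short, bounded history per caller and returns the most recent entries when that caller comes back. The `ConversationMemory` class, its method names, and the caller IDs are hypothetical, and an in-process dictionary stands in for whatever low-latency store a production system would use.

```python
from collections import defaultdict, deque


class ConversationMemory:
    """Hypothetical per-caller memory: keeps the last max_turns summaries."""

    def __init__(self, max_turns=20):
        # A bounded deque per caller drops the oldest entry automatically.
        self._turns = defaultdict(lambda: deque(maxlen=max_turns))

    def remember(self, caller_id, summary):
        """Store a short summary of an interaction for this caller."""
        self._turns[caller_id].append(summary)

    def recall(self, caller_id, limit=3):
        """Return up to `limit` most recent summaries, oldest first."""
        turns = self._turns.get(caller_id)  # .get avoids creating empty entries
        if not turns:
            return []
        return list(turns)[-limit:]


memory = ConversationMemory(max_turns=2)
memory.remember("caller-1", "Asked to rebook a flight")
memory.remember("caller-1", "Chose the 9 a.m. departure")
memory.remember("caller-1", "Requested an aisle seat")

context = memory.recall("caller-1")       # only the two newest entries remain
unknown = memory.recall("caller-2")       # no history for this caller
```

The point of the sketch is the access pattern: context is looked up by caller at the start of a call, so the lookup has to be fast enough to fit inside the response-time budget.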

The memory layer is becoming a concrete demand from sophisticated enterprise buyers, who need deep personalization for the use cases in the deeper layers of adoption.

Details on demand: "A MENA-based enterprise might want an Egyptian Arabic voice instead of a Gulf accent. Enterprises are increasingly focused on these nuances—accents, memory, and other fine-grained personalization," says Sharma. "The demand for such features will only continue to increase as companies explore how to use voice AI for more sophisticated use cases."

No more hold music: The broader objective, Sharma says, is not for Voice AI to mimic a human perfectly but to be demonstrably more efficient. "Success rate is the biggest metric, specifically satisfaction rate. For instance, if you call an airline to rebook a flight and the AI assistant resolves it in under two minutes, you’ll prefer the assistant over waiting 35–45 minutes for a human. As more people realize that voice AI can solve problems efficiently, customer preference will shift," he says.

The ultimate measure of success, Sharma stresses, is whether the system solves a customer's problem so effectively that it becomes the preferred channel—a trend he says is already validated by major investments. "That’s why VCs are investing heavily. The size of recent Series A rounds makes it clear that customer service is still an unsolved problem. Once voice AI shows it can truly deliver, the industry will shift toward it," Sharma concludes.